GitHub Cannot Even Hit Three Nines of Uptime — So I Built a Git Failover Strategy and Here Is Exactly How You Can Too

Last Tuesday, around 2:30 PM Eastern, I was in the middle of deploying a hotfix for a client's production API. Not the fun kind of deployment — the "CEO is texting you directly" kind. I ran git push origin hotfix/payment-gateway and got the one response no developer wants to see during a crisis:

fatal: unable to access 'https://github.com/...': The requested URL returned error: 503

GitHub was down. Again.

I refreshed githubstatus.com. Sure enough: "We are investigating reports of degraded performance for GitHub Actions, API Requests, Codespaces, Copilot, Git Operations, Issues, Pages, Pull Requests, and Webhooks."

So basically, everything.

I sat there for 43 minutes watching the status page cycle between "Investigating" and "Monitoring" while my client's payment gateway remained broken. Forty-three minutes. During business hours. On a Tuesday.

And this week, as if to confirm that this wasn't a one-time thing, the topic "GitHub appears to be struggling with measly three nines availability" hit 186 points on Hacker News. Three nines. That's 99.9% uptime — which sounds impressive until you realize it means 8.76 hours of downtime per year. For context, AWS S3 targets 99.99% (52 minutes/year). Azure DevOps targets 99.95%. GitHub can't even manage the baseline that most cloud services consider embarrassing to miss.
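If you want to check those downtime figures yourself, the arithmetic is short. This is just a sketch that assumes a 365-day year; the function name is mine, not anything official.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def downtime_hours_per_year(availability_pct: float) -> float:
    """Return the yearly downtime budget, in hours, for an availability target."""
    return (100.0 - availability_pct) / 100.0 * HOURS_PER_YEAR


if __name__ == "__main__":
    for target in (99.9, 99.95, 99.99):
        hours = downtime_hours_per_year(target)
        print(f"{target}% -> {hours:.2f} hours ({hours * 60:.0f} minutes) per year")
```

Each extra nine shrinks the budget by a factor of ten, which is why the gap between three and four nines feels so large in practice.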

The Numbers Are Worse Than You Think

I went through GitHub's incident history for the past 12 months. Here's what I found (and yes, I kept a spreadsheet, because I'm that kind of person):

| Metric | GitHub (Actual) | Industry Standard |
|---|---|---|
| Major incidents (30+ min) | 23 in 12 months | <5 for "three nines" |
| Longest outage | ~4 hours (API + Git Ops) | Should be <1 hour |
| Average recovery time | 47 minutes | <15 minutes |
| Services affected per incident | Avg 4.2 of 10 | Should be isolated |
| Estimated actual uptime | ~99.7-99.8% | 99.9% minimum |

That "Services affected per incident" number is the one that really bothers me. When GitHub goes down, it doesn't degrade gracefully. It takes out 4-5 services at once. Actions, API, Git operations, Issues — they all fall together like dominoes. That suggests a shared infrastructure dependency that, to put it diplomatically, probably shouldn't be that shared.

My colleague Nathan, a DevOps lead at a 200-person SaaS company in Portland, put it bluntly during our Slack conversation about this: "We treat GitHub as critical infrastructure but it has the reliability of a side project hosted on a VPS in someone's closet."

Harsh. But after tracking the data, not entirely inaccurate.
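The estimated-uptime row in the table can be sanity-checked from the other two rows. This is a rough sketch, assuming every major incident counts as full downtime for the average recovery time; partial degradations would push the real figure somewhat higher.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes


def estimated_uptime_pct(incidents: int, avg_minutes: float) -> float:
    """Estimate yearly uptime, treating each incident as a full outage."""
    downtime_minutes = incidents * avg_minutes
    return 100.0 * (1.0 - downtime_minutes / MINUTES_PER_YEAR)


if __name__ == "__main__":
    # 23 major incidents at an average of 47 minutes each, from the table.
    print(f"Estimated uptime: {estimated_uptime_pct(23, 47):.2f}%")
```

That works out to about 18 hours of downtime, roughly double the three-nines budget of 8.76 hours.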

Why This Actually Matters

Look, I know what some of you are thinking. "It's just Git. Push later." And if you're a solo developer working on a hobby project, sure. Wait 45 minutes. Go make coffee.

But for most teams I work with, GitHub is embedded in everything:

- CI/CD pipelines trigger on GitHub webhooks and pull from GitHub repos

- Deployments are blocked without GitHub access (GitOps workflows)

- Code review happens in PRs — no GitHub, no reviews, no merging

- Documentation lives in GitHub wikis or repos

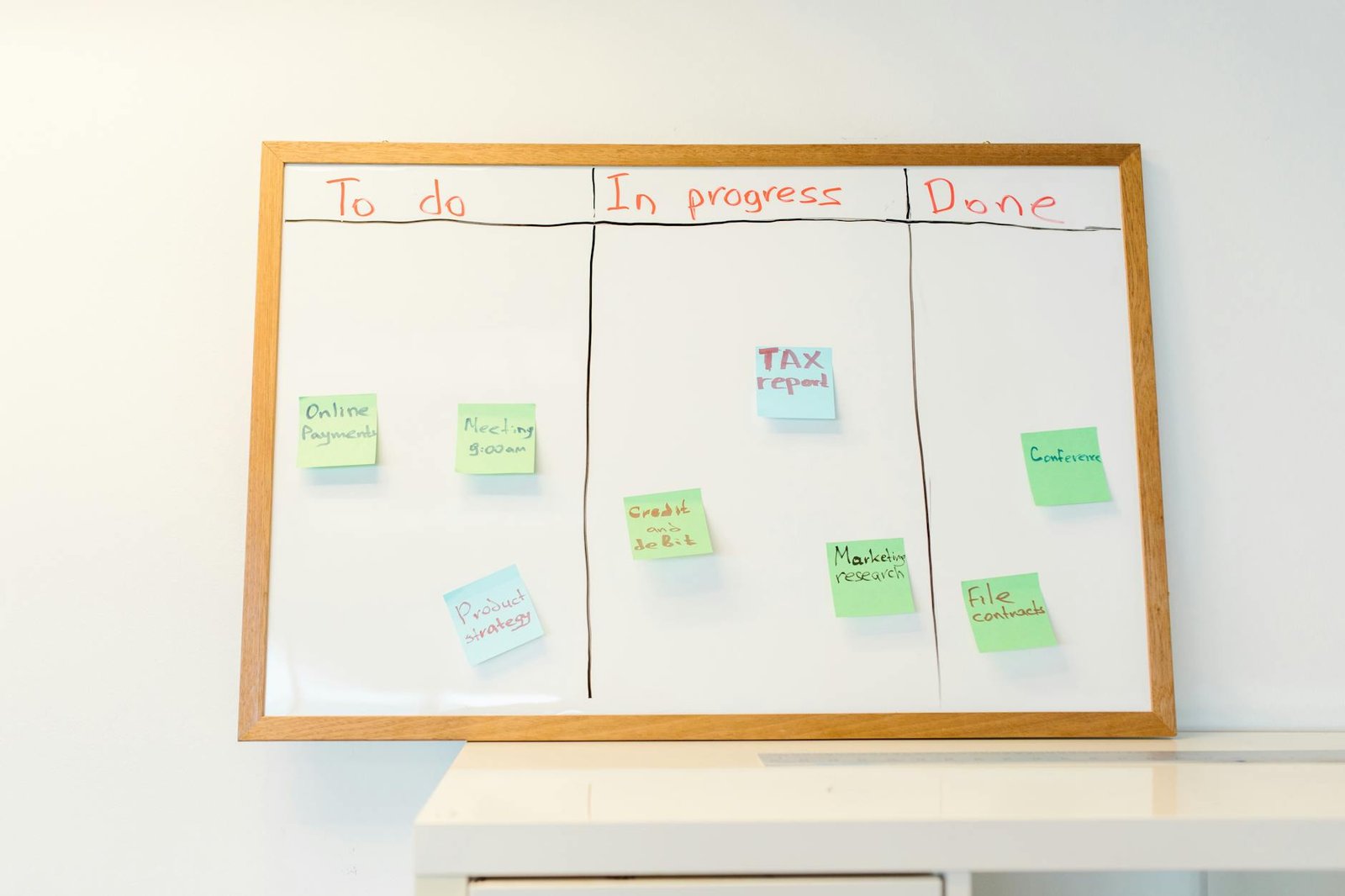

- Issue tracking — some teams use GitHub Issues as their primary project management tool

- GitHub Actions — the CI/CD system itself IS GitHub

- Copilot — now part of many developers' daily workflow

When GitHub goes down, entire engineering organizations go idle. I've seen it. Last September, during a particularly bad outage, my friend Marcus (engineering manager at a Series B startup in Denver) told me his entire 30-person engineering team was effectively dead in the water for two hours. No deploys, no PRs, no CI. He estimated the cost at roughly $15,000 in lost productivity.

For one outage. At one company. Multiply that across GitHub's 100 million+ users.

My Failover Strategy (Step by Step)

After that Tuesday hotfix disaster, I spent the weekend building a failover strategy. Not the "I should really do this someday" kind — the actual, tested, documented kind. Here's exactly what I did.

Step 1: Set Up a Self-Hosted Git Mirror

I spun up a Gitea instance on a Hetzner VPS. Why Gitea? Because it's lightweight (runs on a $5/month VPS), it's open source, its API is modeled closely on GitHub's, and it takes about 10 minutes to install. Once the VPS is running, a free server dashboard like Cockpit is a handy way to monitor it.

I also considered Forgejo (Gitea's community fork), GitLab CE (too heavy for a mirror), and Gogs (less maintained). Gitea hit the sweet spot of lightweight + feature-complete.

Cost: $4.15/month on Hetzner CX22 (2 vCPU, 4GB RAM, 40GB SSD). The same spec on DigitalOcean would be $12/month. On AWS EC2, even more. Hetzner wins the price war for European data centers, though if you need US-based, DigitalOcean or Vultr at ~$12/month are solid choices.

Step 2: Automated Repository Mirroring

Gitea has built-in repository mirroring. For each critical repository, I configured it to pull from GitHub every 15 minutes. If GitHub goes down, my mirror is at most 15 minutes behind.
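You can click through the Gitea UI for each repo, but with 14 of them a script is less tedious. Here's a minimal sketch that uses Gitea's repository migration endpoint (`POST /api/v1/repos/migrate`) to create a pull mirror with a 15-minute interval. The host `git.example.com`, the owner name, and the token are placeholders I made up. Private GitHub repos would also need an `auth_token` field in the payload.

```python
import json
import urllib.request


def build_mirror_request(github_url: str, repo_name: str, owner: str,
                         interval: str = "15m0s") -> dict:
    """Build the JSON payload asking Gitea to create a pull mirror."""
    return {
        "clone_addr": github_url,      # upstream repository on GitHub
        "repo_name": repo_name,        # name of the mirror inside Gitea
        "repo_owner": owner,           # Gitea user or organization (assumed to exist)
        "service": "github",
        "mirror": True,                # keep pulling instead of a one-off import
        "mirror_interval": interval,   # Go-style duration string
    }


def create_mirror(gitea_base: str, token: str, payload: dict) -> int:
    """Send the request to Gitea and return the HTTP status code."""
    req = urllib.request.Request(
        f"{gitea_base}/api/v1/repos/migrate",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"token {token}",
        },
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return resp.status


if __name__ == "__main__":
    payload = build_mirror_request(
        "https://github.com/example/payments-api.git",  # hypothetical repo
        "payments-api",
        "mirrors",
    )
    print(json.dumps(payload, indent=2))
    # create_mirror("https://git.example.com", "YOUR_TOKEN", payload)
```

Gitea enforces a minimum mirror interval in its server config, so an interval much shorter than the default minimum may be rejected unless you change that setting.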

For the truly paranoid (which I am, apparently), I also set up a cron job that does a git fetch --all on every critical repo every 5 minutes and pushes to the Gitea mirror. Belt and suspenders.
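The cron side can be sketched like this. It keeps a bare mirror clone on disk, fetches from GitHub, and pushes everything to Gitea. The URLs and paths are hypothetical. One assumption to flag: as far as I know Gitea treats pull mirrors as read-only, so the push target here would have to be a separate, regular repository rather than the pull mirror from the previous step.

```python
from __future__ import annotations

import subprocess
from pathlib import Path


def sync_commands(github_url: str, gitea_url: str, cache_dir: Path) -> list[list[str]]:
    """Build the git commands for one sync pass of a single repository."""
    commands: list[list[str]] = []
    if not cache_dir.exists():
        # First run: create a bare clone that tracks every ref.
        commands.append(["git", "clone", "--mirror", github_url, str(cache_dir)])
    else:
        # Later runs: refresh all refs and drop ones deleted upstream.
        commands.append(["git", "-C", str(cache_dir), "fetch", "--all", "--prune"])
    # Push every ref to the Gitea copy.
    commands.append(["git", "-C", str(cache_dir), "push", "--mirror", gitea_url])
    return commands


def run_sync(github_url: str, gitea_url: str, cache_dir: Path) -> None:
    """Run one sync pass, raising if any git command fails."""
    for cmd in sync_commands(github_url, gitea_url, cache_dir):
        subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Example crontab entry (hypothetical path):
    #   */5 * * * * /usr/bin/python3 /opt/mirror/sync.py
    run_sync(
        "https://github.com/example/payments-api.git",
        "git@git.example.com:backup/payments-api.git",
        Path("/var/cache/git-mirror/payments-api.git"),
    )
```

A mirror clone also carries GitHub's pull request refs (`refs/pull/*`), which the receiving server may refuse. If that happens, narrowing the push to branches and tags is one way out.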

Total repos mirrored: 14 (the ones that would actually hurt if inaccessible during an outage).

Step 3: CI/CD Failover with Woodpecker CI

This was the trickiest part. If GitHub is down, GitHub Actions is down. You need an alternative CI/CD system ready to go.

I set up Woodpecker CI (a community fork of Drone CI) on the same Hetzner VPS. One caveat: Woodpecker uses its own YAML pipeline format built around container steps, not GitHub Actions workflow syntax, so workflows have to be ported rather than copied. In my case the port was mostly find-and-replace, and if you are already using an open-source AI coding agent like OpenCode, it gets even smoother.

I pre-configured Woodpecker to watch the Gitea mirrors. During normal operations, it sits idle. During a GitHub outage, I flip a DNS record and Woodpecker takes over CI/CD duties from the mirrored repos.

Time to failover: About 3 minutes (update DNS + trigger first build). Not instant, but infinitely better than waiting 45 minutes for GitHub to come back.
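Deciding when to flip is the part worth automating first. Here's a small sketch that reads the public component list from githubstatus.com (a standard Statuspage endpoint) and reports whether the services you care about are degraded. The watched component names are my choice, and the DNS update itself is left out because it depends entirely on your provider.

```python
import json
import urllib.request

STATUS_URL = "https://www.githubstatus.com/api/v2/components.json"
WATCHED = frozenset({"Git Operations", "Actions"})  # assumed to matter most


def should_fail_over(components: list, watched: frozenset = WATCHED) -> bool:
    """Return True if any watched component is not fully operational."""
    return any(
        c.get("name") in watched and c.get("status") != "operational"
        for c in components
    )


def fetch_components(url: str = STATUS_URL) -> list:
    """Download and parse the current component list from the status page."""
    with urllib.request.urlopen(url, timeout=10) as resp:
        return json.load(resp)["components"]


if __name__ == "__main__":
    if should_fail_over(fetch_components()):
        print("GitHub looks degraded: time to switch to the mirror")
    else:
        print("GitHub looks healthy")
```

Status pages tend to lag the actual outage, so a failing `git ls-remote` against GitHub is probably a more honest signal. Treat this as one input, not the trigger.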

Step 4: Emergency Deployment Workflow

The hotfix scenario — the one that started this whole journey — needed a specific solution. I documented an emergency deployment workflow:

- Developer pushes to Gitea mirror directly (or to a local remote that auto-pushes to Gitea)

- Woodpecker CI runs tests against the Gitea repo

- If tests pass, deploy script pushes to production via SSH (bypassing GitHub entirely)

- When GitHub recovers, push changes back and reconcile
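The first three steps above can be sketched as a fail-fast script. This is an illustration under assumptions, not my exact deploy script: the deploy host and script path are placeholders, and `ci_passed` is a hypothetical callable you would supply to check the Woodpecker result, since I don't want to guess at an API here. The reconcile step is left out because it's a plain push once GitHub is reachable again.

```python
import subprocess
from typing import Callable


def run_emergency_deploy(
    branch: str,
    ci_passed: Callable[[str], bool],
    run=subprocess.run,
    mirror_remote: str = "gitea-mirror",
    deploy_host: str = "deploy@prod.example.com",   # hypothetical host
    deploy_script: str = "/opt/deploy/release.sh",  # hypothetical script
) -> bool:
    """Push to the mirror, gate on CI, then deploy over SSH.

    Returns True if the deploy ran, False if CI blocked it.
    """
    # 1. Push the hotfix branch straight to the Gitea mirror.
    run(["git", "push", mirror_remote, branch], check=True)

    # 2. Stop here unless the CI run for this branch succeeded.
    if not ci_passed(branch):
        return False

    # 3. Deploy over SSH, bypassing GitHub entirely.
    run(["ssh", deploy_host, deploy_script, branch], check=True)
    return True


if __name__ == "__main__":
    def ask_operator(branch: str) -> bool:
        """Stand-in CI gate: ask a human to confirm the Woodpecker run."""
        answer = input(f"Did Woodpecker pass for {branch}? [y/N] ")
        return answer.strip().lower() == "y"

    deployed = run_emergency_deploy("hotfix/payment-gateway", ask_operator)
    print("deployed" if deployed else "blocked by CI")
```

Passing `run` as a parameter keeps the sequence testable without touching a real remote, which matters for something you only run under pressure.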

I tested this end-to-end last Thursday. Simulated a "GitHub is down" scenario, pushed a change to Gitea, watched Woodpecker run tests, and deployed to a staging environment. Total time from git push to deployment: 4 minutes 23 seconds.

That's a lot better than 43 minutes of staring at a status page.

Step 5: Developer Communication Plan

The human element matters. I wrote a one-page runbook that lives in our team's Notion (because if GitHub is down, you can't read the runbook on GitHub — yes, I've seen teams make this mistake):

- How to add the Gitea remote to your local repo

- How to push/pull from the mirror

- Where to open issues during an outage (Gitea has issue tracking)

- Who to contact for emergency deploys

- How to reconcile changes after GitHub recovers
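For the Git-related items in that list, it helps to paste the literal commands into the runbook so nobody has to think during an outage. A tiny generator like this keeps them consistent across repos. The mirror URL is a placeholder, and `gitea-mirror` is just the remote name I use.

```python
import shlex


def runbook_commands(mirror_url: str, branch: str,
                     remote: str = "gitea-mirror") -> dict:
    """Return the git commands a developer needs during and after an outage."""
    return {
        "add remote": ["git", "remote", "add", remote, mirror_url],
        "push": ["git", "push", remote, branch],
        "pull": ["git", "pull", remote, branch],
        # After GitHub recovers: send the same branch back to the primary.
        "reconcile": ["git", "push", "origin", branch],
    }


if __name__ == "__main__":
    commands = runbook_commands(
        "git@git.example.com:team/payments-api.git",  # hypothetical mirror URL
        "hotfix/payment-gateway",
    )
    for label, cmd in commands.items():
        print(f"{label:>12}: {shlex.join(cmd)}")
```

If GitHub's copy of the branch moved while you were on the mirror, the reconcile push will be rejected and you'll need to merge or rebase first, which is worth a line in the runbook too.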

Self-Hosted Git Platforms Compared

Since I evaluated several options for the mirror, here's an honest comparison:

| Platform | Min Resources | Setup Time | GitHub API Compat | Best For |
|---|---|---|---|---|
| Gitea | 1 vCPU, 1GB RAM | 10 min | Good | Lightweight mirror + failover |
| Forgejo | 1 vCPU, 1GB RAM | 10 min | Good | Same as Gitea, community-governed |
| GitLab CE | 4 vCPU, 8GB RAM | 30 min | Different API | Full GitHub replacement |
| OneDev | 2 vCPU, 4GB RAM | 15 min | Partial | Built-in CI + issue tracking |
| Soft Serve | 1 vCPU, 512MB RAM | 5 min | None (TUI) | Ultra-minimal Git hosting |

If you just need a mirror, Gitea or Forgejo. If you want to potentially replace GitHub entirely, GitLab CE. If you want the absolute minimum footprint, Soft Serve. (Though I'll admit Soft Serve's terminal-based UI is an acquired taste. My intern looked at it and said "this looks like 1995." He's not wrong.)

What About Just Using GitLab or Bitbucket as Primary?

I get asked this a lot. "Why not just switch to GitLab/Bitbucket?"

You could. And honestly, if you're starting fresh, GitLab's self-hosted option with its integrated CI/CD is compelling. But for teams already embedded in the GitHub ecosystem — Actions workflows, Copilot integration, marketplace apps, GitHub-specific tooling — the switching cost is enormous.

The more practical approach is what I described: keep GitHub as primary (it's still the best in terms of ecosystem and developer experience), but have a tested failover strategy ready. It's the same logic as backing up your database — you don't stop using PostgreSQL just because disks can fail. You set up replication.

The Cost of My Failover Setup

| Component | Monthly Cost |
|---|---|
| Hetzner CX22 VPS | $4.15 |
| Gitea (free, open source) | $0 |
| Woodpecker CI (free, open source) | $0 |
| Domain for mirror (optional) | ~$1/month amortized |
| Total | ~$5.15/month |

Five dollars a month. That's less than a single engineer's hourly rate during a GitHub outage. The ROI is almost embarrassing to calculate.
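To put numbers on that, here's the back-of-the-envelope version using the figures from this post: $5.15/month for the setup, and Marcus's estimate of $15,000 for a two-hour outage across 30 engineers. It assumes, generously, that a failover would have avoided the whole loss.

```python
def annual_cost(monthly_cost: float) -> float:
    """Yearly cost of the failover setup."""
    return monthly_cost * 12


def implied_hourly_rate(outage_cost: float, engineers: int, hours: float) -> float:
    """Per-engineer hourly cost implied by an outage estimate."""
    return outage_cost / (engineers * hours)


if __name__ == "__main__":
    yearly = annual_cost(5.15)
    rate = implied_hourly_rate(15_000, 30, 2)
    print(f"Failover setup: ${yearly:.2f} per year")
    print(f"Implied cost: ${rate:.0f} per engineer-hour")
    print(f"One such outage covers about {15_000 / yearly:.0f} years of the setup")
```

Even if the failover only recovers a fraction of that lost time, the yearly bill is still small next to a single bad afternoon.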

Marcus, the engineering manager I mentioned earlier? After I shared this setup with him, he built the same thing the following weekend. His exact words: "I'm angry I didn't do this two years ago."

Should You Panic?

No. GitHub is still a good platform. The ecosystem is unmatched. Copilot is genuinely useful. Actions is powerful. The developer experience is the best in the industry.

But the reliability story is simply not where it should be for a platform that millions of developers and thousands of companies depend on as critical infrastructure. Struggling to hit three nines isn't good enough anymore. Not when your competition is targeting four nines.

And until GitHub fixes that — which, based on the trajectory, might take a while — having a failover strategy isn't paranoia. It's basic engineering hygiene.

The next time GitHub goes down on a Tuesday afternoon during your critical deployment, you can either sit there watching a status page like I did... or you can git push gitea-mirror hotfix/payment-gateway and keep moving.

I know which one I'm choosing.

The Hacker News discussion about GitHub's availability struggles is at news.ycombinator.com. The status page is at githubstatus.com — bookmark it, because you'll need it eventually.

— Perspective from operating a portfolio of aggregator sites plus 50+ client projects at Warung Digital Teknologi (wardigi.com), across Hostinger, VPS, and multi-region cloud infrastructure.
